By ICTpost Cyber Intelligence Bureau

The operating assumptions of cybersecurity are no longer shifting gradually—they are being overturned.

In early 2026, multiple security research teams published analyses of a sophisticated malware framework developed largely with artificial intelligence assistance. Built at unprecedented speed, the system ran to tens of thousands of lines of functional code and moved from design to deployment in a matter of days. Similar findings were independently documented by Check Point Research and IBM X‑Force, marking the first clear evidence of advanced AI‑generated malware frameworks moving beyond proof‑of‑concept into operational reality. [securitybo…levard.com], [research.c…kpoint.com], [ibm.com]

This moment matters not because the malware was technically revolutionary, but because it confirms a deeper transformation: artificial intelligence has become an accelerant of cyber conflict. We are no longer discussing isolated experiments. We are witnessing the early stages of an AI‑driven cyber arms race—one that compresses timelines, lowers barriers, and industrializes attack capability across borders.

What once required nation‑state budgets and elite teams is increasingly accessible to small, distributed actors with the right models and intent.

From Human Craft to Machine‑Accelerated Threats

Historically, advanced malware development demanded time, scarce expertise, and sustained collaboration. That equation has collapsed.

Security analysts documented multiple malware families assembled with AI‑assisted coding workflows that automated structural logic, documentation, and testing, allowing single operators to execute development cycles previously associated with organized teams. Check Point’s “VoidLink” and IBM’s “Slopoly” case studies both demonstrated malware reaching functional maturity in under a week. [research.c…kpoint.com], [thehackernews.com]

Experts caution against exaggeration.

“This is not artificial intelligence autonomously deciding to launch attacks,” one senior analyst observed. “Human intent remains central. What has changed is how rapidly intent can be converted into operational capability.” [securityweek.com]

In practical terms, AI now functions as a force multiplier—allowing small crews to build tools once confined to state‑sponsored groups or highly capitalized criminal syndicates.

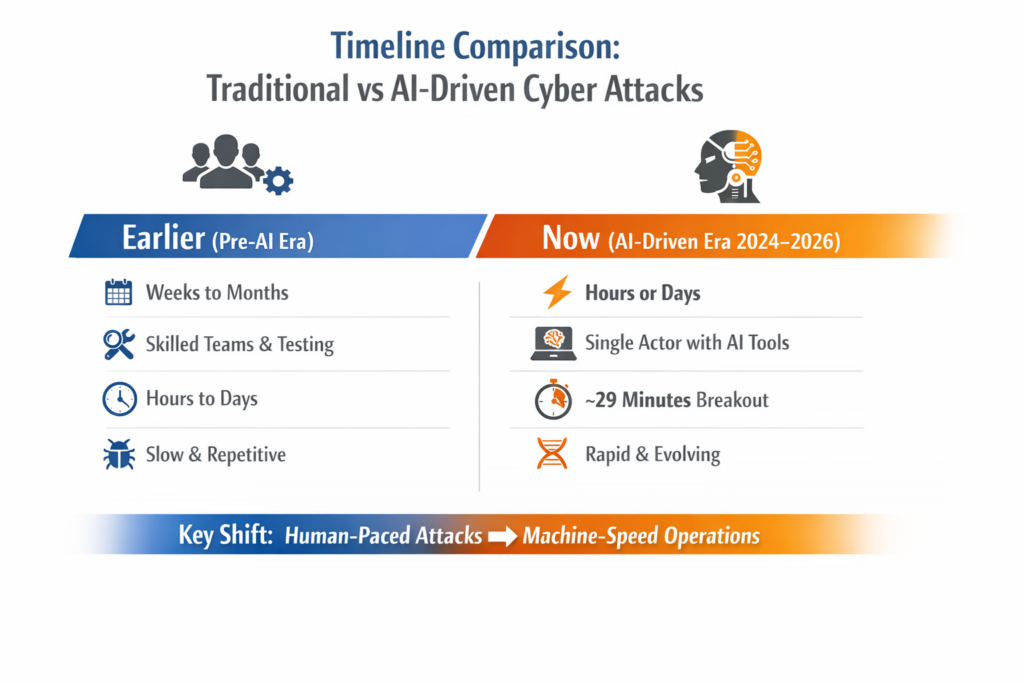

The Strategic Shift Is Velocity, Not Sophistication

The most disruptive impact of AI‑assisted malware is not increased technical brilliance. It is velocity.

Artificial intelligence dramatically reduces:

- Development timelines

- Dependence on deep technical skills

- Marginal cost per attack

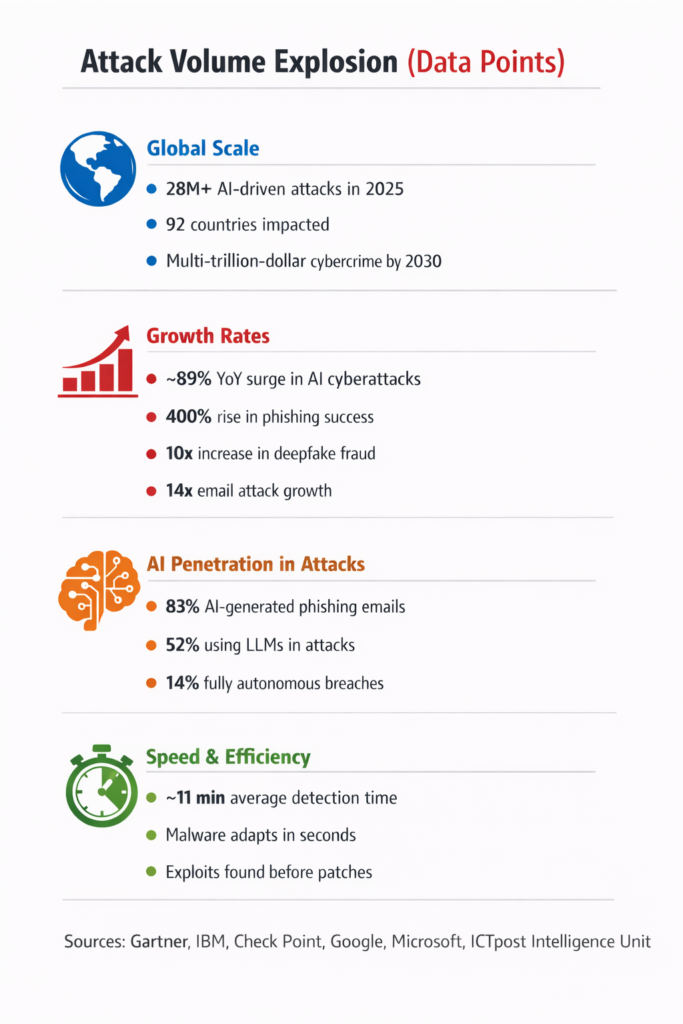

Across global threat telemetry, security vendors report faster campaign iteration, minimal reuse of malware, and a surge in disposable variants launched at industrial scale. Bitdefender’s 2026 findings in South Asia highlight an AI‑assisted model prioritizing scale over elegance—flooding detection pipelines through sheer volume. [itwire.com]

Global executive risk surveys now consistently rank AI‑enabled cyber threats alongside geopolitical instability, supply chain disruption, and economic volatility. Cybercrime is becoming faster, cheaper, and more scalable, steadily shifting advantage toward attackers who can operate at machine speed.

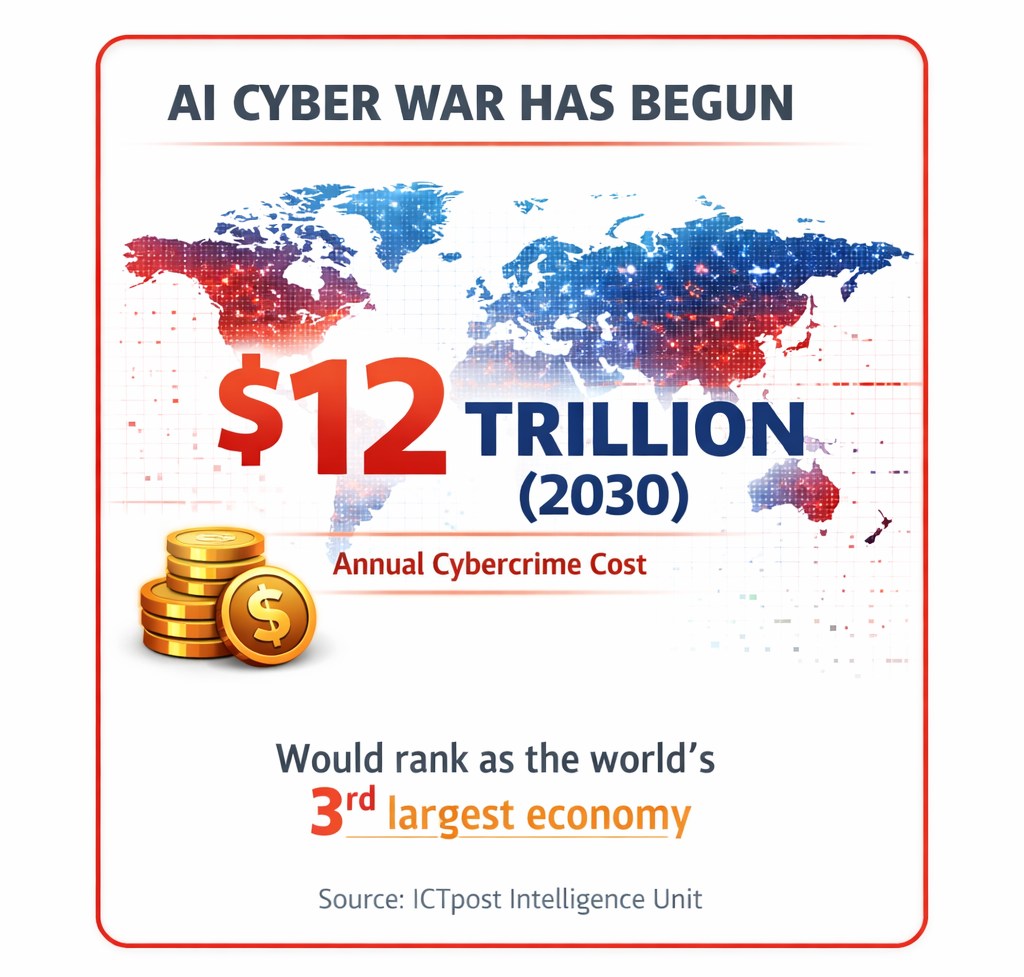

Cybercrime’s Economic Gravity

The acceleration of AI‑enabled attacks has profound macroeconomic implications.

By 2030, global cybercrime is projected to cost approximately $12 trillion annually, placing it among the largest ongoing economic drains worldwide.

This long‑term projection by the ICTpost Intelligence Unit factors in direct financial losses, productivity destruction, intellectual property theft, operational disruption, legal costs, and systemic economic impact.

To put this in perspective, if cybercrime were measured as a national economy, it would rank among the world’s largest—surpassing the GDP of most countries. The costs extend well beyond stolen funds, undermining innovation incentives and eroding trust in digital infrastructure. [onlinecybe…degree.org], [statista.com]

An AI‑versus‑AI Battlespace

Cybersecurity is rapidly becoming an algorithmic contest.

Threat actors are already leveraging AI to:

- Generate highly targeted phishing content at scale

- Continuously scan for misconfigurations and zero‑day exposures

- Mutate malware behavior to evade static detection

- Adapt campaign logic in near real time

In response, defenders are deploying AI‑driven systems for:

- Continuous behavioral monitoring

- Anomaly and sequence detection

- Automated triage and containment

The result is a machine‑against‑machine environment where data quality, model training, and response latency matter as much as perimeter defenses.
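To make "anomaly detection" concrete, the following is a minimal sketch of one of the simplest behavioral checks a defender might run: flagging a metric that suddenly departs from its recent baseline. It is an illustration only, not a description of any vendor's product. The function name `flag_anomalies`, the window size, the threshold, and the failed-login counts are all assumptions made for this example.

```python
from statistics import mean, stdev


def flag_anomalies(counts, window=5, threshold=3.0):
    """Return indices whose value deviates from the mean of the
    preceding `window` values by more than `threshold` standard deviations."""
    flagged = []
    for i in range(window, len(counts)):
        baseline = counts[i - window:i]
        mu = mean(baseline)
        sigma = stdev(baseline)
        if sigma == 0:
            # Perfectly flat baseline: treat any change as a deviation.
            if counts[i] != mu:
                flagged.append(i)
            continue
        if abs(counts[i] - mu) / sigma > threshold:
            flagged.append(i)
    return flagged


# Hypothetical per-minute counts of failed logins (illustrative data only).
failed_logins = [4, 5, 3, 4, 5, 4, 6, 5, 48, 5]
print(flag_anomalies(failed_logins))  # -> [8]
```

The spike at index 8 is flagged because it sits far outside the trailing baseline. Production systems layer far richer models on top of this idea, but the underlying point about response latency holds: the check is only useful if it runs and triggers containment faster than the attacker iterates.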

“If attackers operate at AI speed and defenders do not,” a global CISO warned, “the battle is effectively decided before the alert is generated.” [threatdown.com]

India’s Digital Scale Meets AI‑Era Threats

For India, the stakes are unusually high.

As one of the world’s fastest‑growing digital economies—with Digital Public Infrastructure spanning payments, identity, taxation, healthcare, and logistics—India represents both a prime target and a critical case study.

AI‑enabled attacks increasingly intersect with:

- Financial systems and payment rails

- Manufacturing and industrial automation

- Healthcare platforms and hospital networks

- Energy distribution and transport

- Electoral and information ecosystems

Indian monitoring agencies have already observed increased AI‑assisted phishing, malware mutability, and rapid tool reuse across campaigns, reinforcing the challenge of protecting systems designed for mass inclusion and high availability. [itwire.com]

India’s experience is likely to shape global cyber doctrine—particularly for emerging economies balancing speed of digitization with resilience.

Cybercrime as a Global Supply Chain

Modern cybercrime now resembles an industrial ecosystem.

Attacks are assembled from:

- Malware‑as‑a‑Service platforms

- Initial access brokers

- Bulletproof hosting providers

- Dark‑market exploit exchanges

Interisle Consulting Group’s Cybercrime Supply Chain 2025 report analyzed over 26 million attack events, documenting 60% year‑over‑year growth and identifying chokepoints in infrastructure provisioning that criminals worldwide exploit. [interisle.net]

The critical insight is structural: cybercrime behaves like a supply chain—and supply chains can be disrupted.

From Hacker Culture to Cyber Geopolitics

What began decades ago as experimentation has evolved into a domain of:

- State‑aligned cyber operations

- Large‑scale extortion economies

- AI‑driven influence and destabilization campaigns

The boundary between independent criminals, corporate operators, and state proxies is blurring, complicating attribution and escalation control.

“Hacking has moved from basements to boardrooms—and increasingly to battlefields.”

What Comes Next

AI‑assisted malware represents an opening chapter—not an endpoint.

Over the next several years, analysts anticipate:

- Greater automation across entire attack chains

- AI agents capable of real‑time tactical decision‑making

- Reduced human intervention in active operations [securityweek.com]

Leading defensive strategies are already shifting toward:

- Resilience over compliance

- AI‑enabled defense at scale

- Financial framing of cyber risk

- Continuous asset visibility

- Active disruption of attacker ecosystems

A New Cyber Reality

AI‑generated malware is not merely a technical milestone. It marks a strategic inflection point in how power, risk, and conflict manifest in the digital age.

We are entering an era in which:

- Cyber threats scale faster than human governance

- Human intent is amplified by machine execution

- The line between human‑led and automated operations dissolves

The defining question is no longer whether cyber threats can be eliminated—but whether systems can remain resilient in a world where intelligence itself has become industrialized.

editor@ictpost.com